For years, the cybersecurity community debated whether artificial intelligence would eventually be weaponized to discover and exploit software vulnerabilities autonomously. That debate is over.

On May 12, 2026, Google’s Threat Intelligence Group (GTIG) published its latest AI Threat Tracker — and buried within its 33 pages is a finding that should alarm every industrial security defender: a cybercrime group has used AI to develop a working zero-day exploit, marking the first confirmed case of AI-generated vulnerability weaponization in the wild.

But the zero-day is just the beginning. The same report reveals autonomous malware that uses AI to navigate devices without human oversight, nation-states industrializing vulnerability discovery through AI agents, and a new class of polymorphic malware that uses LLMs to rewrite itself in real time.

The implications for OT/ICS environments are profound.

The First AI-Generated Zero-Day

Google’s finding is unambiguous: a prominent cybercrime group leveraged AI to discover and weaponize a zero-day vulnerability in a popular open-source web-based system administration tool. The exploit, implemented as a Python script, bypasses two-factor authentication (2FA) by exploiting a semantic logic flaw — a hardcoded trust assumption that the developers never intended as a security bypass.

What makes this significant is the type of vulnerability AI found. Traditional scanners and fuzzers excel at detecting memory corruption bugs, improper input sanitization, and crash-inducing inputs. But this was different — a high-level logic flaw where the developer hardcoded an exception to their own 2FA enforcement. The AI model read the developer’s intent, identified where the enforcement logic contradicted its own hardcoded exceptions, and surfaced a “dormant logic error that appears functionally correct to traditional scanners but is strategically broken from a security perspective.”
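To make that class of bug concrete, here is a minimal sketch of what a hardcoded exception to 2FA enforcement can look like. It is purely illustrative: the affected tool was not named, so every identifier below (`TRUSTED_CLIENT`, `LoginAttempt`, `login_flawed`, `login_fixed`) is hypothetical, and the assumption that a valid password is still required is ours, not Google's.

```python
from dataclasses import dataclass

# Hypothetical names throughout; this is not the affected tool's code.
TRUSTED_CLIENT = "internal-monitor"  # hardcoded exception left by a developer


@dataclass
class LoginAttempt:
    username: str
    password_ok: bool
    otp_ok: bool
    client_id: str  # supplied by the caller, so it is attacker-controlled


def login_flawed(attempt: LoginAttempt) -> bool:
    """Enforce 2FA, except for a client the developer assumed was trusted."""
    if not attempt.password_ok:
        return False
    if attempt.client_id == TRUSTED_CLIENT:
        return True  # 2FA silently skipped: the dormant logic error
    return attempt.otp_ok


def login_fixed(attempt: LoginAttempt) -> bool:
    """Enforce 2FA for every caller, with no identity-based shortcut."""
    return attempt.password_ok and attempt.otp_ok


if __name__ == "__main__":
    spoofed = LoginAttempt("alice", password_ok=True, otp_ok=False,
                           client_id=TRUSTED_CLIENT)
    print("flawed:", login_flawed(spoofed))
    print("fixed: ", login_fixed(spoofed))
```

Nothing here crashes or mishandles memory, which is why a fuzzer has nothing to find. The flaw only shows up when the exception is read against the enforcement it undermines.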

Google identified the exploit as AI-generated based on telltale signatures: educational docstrings throughout the code, a hallucinated CVSS score, structured textbook-Pythonic formatting characteristic of LLM training data, and a suspiciously clean ANSI color class. The threat actors planned mass exploitation, but GTIG worked with the affected vendor to responsibly disclose and patch the vulnerability before the exploit could be deployed at scale.
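Analysts triaging a suspicious Python script could check for some of these traits mechanically. The sketch below is not GTIG's method. It is a rough heuristic under our own assumptions, and the function name `llm_style_indicators` and the three indicators it reports are hypothetical. Plenty of human-written code would also match, so the output is a prompt for closer review rather than a verdict.

```python
import ast
import re


def _has_ansi_constants(cls: ast.ClassDef) -> bool:
    """True if the class body contains string constants with ANSI escapes."""
    return any(
        isinstance(node, ast.Constant)
        and isinstance(node.value, str)
        and "\x1b[" in node.value
        for node in ast.walk(cls)
    )


def llm_style_indicators(source: str) -> dict:
    """Collect weak stylistic indicators from a Python source string.

    The source is only parsed, never executed.
    """
    tree = ast.parse(source)
    nodes = list(ast.walk(tree))
    funcs = [n for n in nodes
             if isinstance(n, (ast.FunctionDef, ast.AsyncFunctionDef))]
    classes = [n for n in nodes if isinstance(n, ast.ClassDef)]
    documented = sum(1 for f in funcs if ast.get_docstring(f))
    return {
        # Share of functions that carry a docstring.
        "docstring_ratio": documented / len(funcs) if funcs else 0.0,
        # Any mention of a CVSS score in code or comments.
        "mentions_cvss": bool(re.search(r"\bCVSS\b", source, re.IGNORECASE)),
        # A class holding ANSI color escape constants.
        "ansi_color_class": any(_has_ansi_constants(c) for c in classes),
    }
```

Because `ast.parse` only builds a syntax tree, the sample is never run, which matters when the input is a live exploit.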

The vendor and tool were not named publicly.

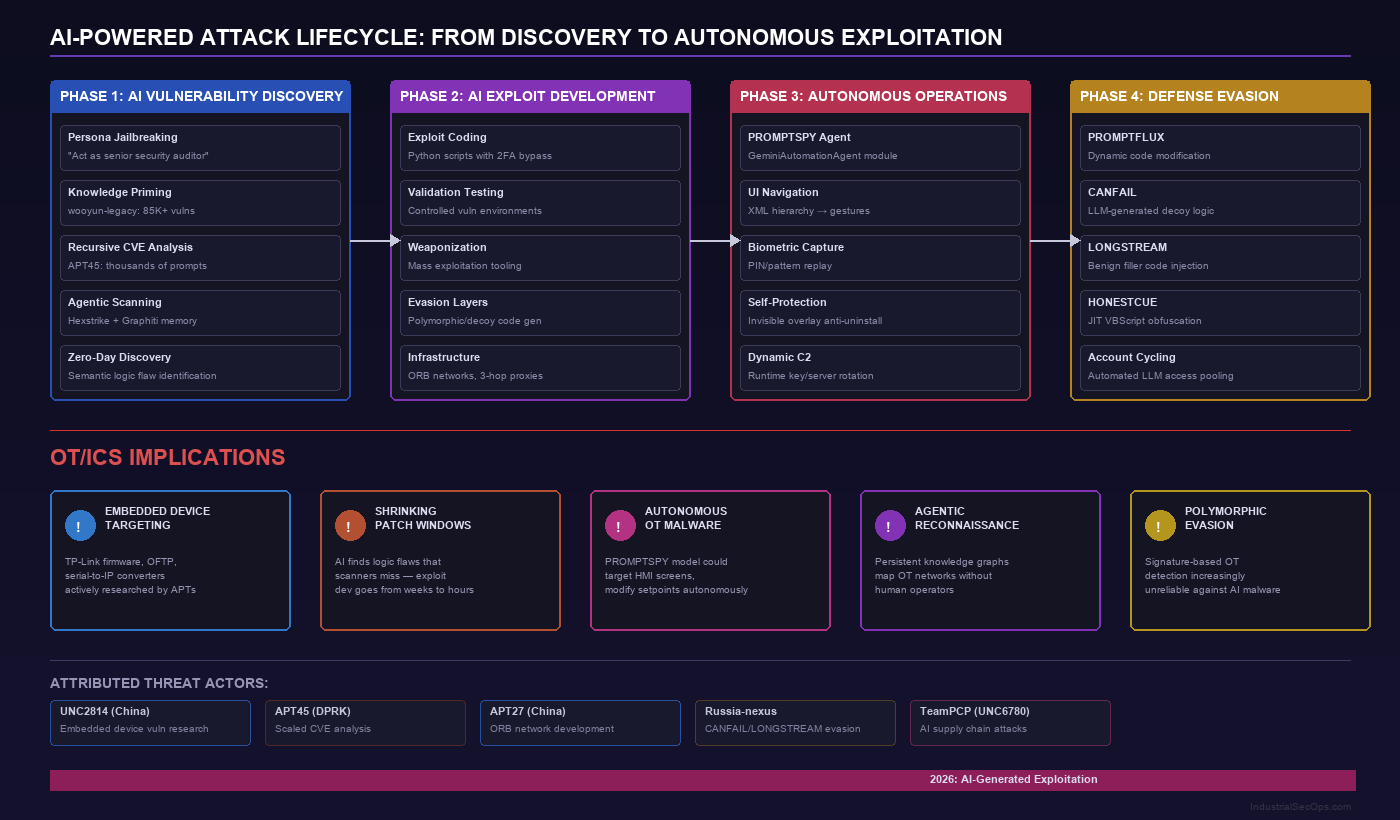

Nation-States Are Industrializing AI-Powered Vulnerability Research

The GTIG report reveals that state-sponsored actors from China and North Korea have moved well beyond experimental AI use into systematic, scaled vulnerability research programs.

China’s UNC2814 employs persona-driven jailbreaking against commercial AI models, directing them to act as “senior security auditors” or “C/C++ binary security experts” to bypass safety guardrails. Their targets are particularly concerning for industrial defenders: TP-Link router firmware and Odette File Transfer Protocol (OFTP) implementations, both commonly found in manufacturing supply chains.

In a more sophisticated approach, Chinese threat actors have integrated a specialized vulnerability repository called “wooyun-legacy,” a Claude Code skill plugin containing a distilled knowledge base of over 85,000 real-world vulnerability cases from the WooYun bug bounty platform (2010–2016). By priming AI models with this historical vulnerability data, attackers enable in-context learning that steers the model to identify logic flaws that base models would otherwise miss.

North Korea’s APT45 has adopted a brute-force approach: sending thousands of repetitive prompts that recursively analyze different CVEs and validate proof-of-concept exploits. “This results in a more robust arsenal of exploit capabilities that would be impractical to manage without AI assistance,” Google noted.

Perhaps most alarming, a suspected PRC-nexus actor deployed agentic tools — Hexstrike and Strix — against a Japanese technology firm and a prominent East Asian cybersecurity company. Hexstrike used the Graphiti memory system, a temporal knowledge graph, to maintain a persistent model of the attack surface, enabling the agent to autonomously pivot between reconnaissance tools based on its own internal reasoning. Strix automated vulnerability identification and validation with minimal human oversight.

This is no longer research assistance — it’s autonomous offensive operations.

PROMPTSPY: The Autonomous AI Malware

While a zero-day exploit developed with AI assistance is alarming, PROMPTSPY represents something potentially more dangerous: malware that uses AI to think and act on its own during an intrusion.

PROMPTSPY is an Android backdoor that integrates a module called “GeminiAutomationAgent.” Here’s how it works:

- The malware serializes the device’s visible UI hierarchy into XML using the Accessibility API

- This payload is sent to Google’s gemini-2.5-flash-lite model via HTTP POST

- The model analyzes the spatial geometry of the UI and returns structured JSON commands

- PROMPTSPY parses these commands and simulates physical gestures — clicks, swipes — at calculated coordinates

- The cycle repeats autonomously, allowing the malware to navigate the device like a human user

The implications are staggering. PROMPTSPY doesn’t just exfiltrate data — it uses the device. It can capture biometric authentication patterns and replay them later. It renders invisible overlays over the “Uninstall” button to prevent removal. If the device goes idle, Firebase Cloud Messaging relaunches the backdoor silently.

The entire C2 infrastructure — including Gemini API keys and VNC relay servers — can be rotated at runtime without redeploying the malware. The developers clearly anticipated defensive countermeasures.
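Malware like this depends on reaching a commercial model API, which gives defenders one observable: a device with no business calling a generative-AI endpoint suddenly doing so. The sketch below assumes proxy or DNS logs already reduced to `(client, destination_host)` pairs. The function name, the log shape, and the watchlist are our assumptions, and the single hostname listed is the public Gemini API host, which a real deployment would extend.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

# Assumption: a watchlist of generative-AI API hostnames. Extend as needed.
AI_API_HOSTS: Set[str] = {"generativelanguage.googleapis.com"}


def unexpected_ai_api_clients(
    records: Iterable[Tuple[str, str]],
    approved_clients: Set[str],
) -> Dict[str, int]:
    """Count AI API requests per client that is not on the approved list.

    records: (client_id, destination_host) pairs, e.g. from proxy or DNS logs.
    """
    hits: Dict[str, int] = defaultdict(int)
    for client, host in records:
        # Normalize case and a trailing dot, as seen in DNS logs.
        normalized = host.lower().rstrip(".")
        if normalized in AI_API_HOSTS and client not in approved_clients:
            hits[client] += 1
    return dict(hits)


if __name__ == "__main__":
    log = [
        ("dev-ws", "generativelanguage.googleapis.com"),
        ("tablet-07", "generativelanguage.googleapis.com"),
        ("tablet-07", "example.com"),
    ]
    print(unexpected_ai_api_clients(log, approved_clients={"dev-ws"}))
```

Because the report notes that API keys and relay servers can be rotated at runtime, matching on destination host and client identity is likely more durable than matching on any specific key or IP address.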

AI-Augmented Evasion: Malware That Rewrites Itself

The report also documents a new generation of AI-powered evasion techniques targeting Ukrainian organizations:

- CANFAIL: Contains LLM-generated decoy code with developer comments explicitly noting “these blocks are not used” — filler content designed to dilute malicious signatures

- LONGSTREAM: 32 instances of daylight-saving-time queries scattered throughout as benign-looking noise — activity that “appears functionally correct” to automated analysis

- PROMPTFLUX: Dynamic code modification using the Gemini API for just-in-time self-modification

- APT27: Used AI to accelerate development of an ORB network management tool with 3-hop multi-proxy anonymization using residential IP addresses from mobile SIM cards

These aren’t theoretical capabilities — they’re deployed in active operations against real targets.

What This Means for OT/ICS Security

For industrial defenders, this GTIG report demands an immediate reassessment of threat models:

Shrinking Patch Windows: If AI can discover logic flaws that traditional scanners miss, and do so at scale, the window between a vulnerability’s introduction and its exploitation narrows dramatically. OT environments with 6- to 12-month patch cycles are increasingly exposed.

Embedded Device Targeting: UNC2814’s specific focus on TP-Link firmware and OFTP implementations signals growing nation-state interest in the networking equipment that connects OT environments. Serial-to-IP converters, protocol gateways, and edge routers are prime targets for AI-accelerated vulnerability research.

Autonomous Lateral Movement: If PROMPTSPY-style AI integration reaches OT-specific malware — imagine an autonomous agent navigating HMI screens, modifying setpoints, or disabling safety systems without human operators issuing commands — the impact on safety-critical systems could be catastrophic.

Agentic Reconnaissance: Tools like Hexstrike with persistent memory graphs represent the future of OT network reconnaissance. An agent that maintains state about discovered assets, tested vulnerabilities, and network topology can systematically map and exploit industrial networks far faster than human operators.

Defense Evasion at Scale: AI-generated decoy code and polymorphic malware mean that signature-based detection (still dominant in many OT environments) becomes increasingly unreliable.

Defensive Recommendations

- Assume accelerated exploitation timelines: Treat every disclosed OT vulnerability as potentially exploitable within days, not months. Put compensating controls in place immediately rather than waiting for the next scheduled patch window.

- Deploy behavioral monitoring: Signature-based detection cannot keep pace with AI-generated polymorphic malware. Invest in network behavior analysis that detects anomalous communication patterns regardless of payload signatures.

- Harden embedded devices: Audit serial-to-IP converters, protocol gateways, and edge networking equipment. Disable unnecessary services, change default credentials, and segment these devices from both IT and core OT networks.

- Monitor AI API access: If your organization uses AI tools, monitor for unauthorized API key usage and unusual model invocations that could indicate compromised development environments.

- Implement OT-specific zero trust: Assume that any device with network connectivity could be compromised by autonomous malware. Enforce strict communication policies at the protocol level.

- Plan for autonomous threats: Update incident response playbooks to address scenarios where malware makes real-time decisions. Response timelines must account for AI-speed lateral movement.
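As one illustration of what enforcing communication policy “at the protocol level” can mean, the sketch below checks observed Modbus traffic against an explicit allowlist of source, destination, and function code. It assumes flows have already been decoded by a sensor or firewall. The `Flow` type, the device names, and the policy itself are hypothetical; the function codes are standard Modbus (3 reads holding registers, 6 writes a single register, 16 writes multiple registers).

```python
from typing import Dict, FrozenSet, Iterable, List, NamedTuple, Tuple


class Flow(NamedTuple):
    src: str
    dst: str
    protocol: str
    function_code: int


PolicyKey = Tuple[str, str, str]

# Hypothetical policy: which source may send which function codes to which device.
# Modbus codes: 3 = Read Holding Registers, 6 = Write Single Register,
# 16 = Write Multiple Registers.
POLICY: Dict[PolicyKey, FrozenSet[int]] = {
    ("hmi-01", "plc-01", "modbus"): frozenset({3}),
    ("eng-ws", "plc-01", "modbus"): frozenset({3, 6, 16}),
}


def is_allowed(flow: Flow, policy: Dict[PolicyKey, FrozenSet[int]] = POLICY) -> bool:
    """Default deny: a flow passes only if its pair and function code are listed."""
    allowed = policy.get((flow.src, flow.dst, flow.protocol), frozenset())
    return flow.function_code in allowed


def violations(flows: Iterable[Flow],
               policy: Dict[PolicyKey, FrozenSet[int]] = POLICY) -> List[Flow]:
    """Return every flow that the policy does not explicitly permit."""
    return [f for f in flows if not is_allowed(f, policy)]


if __name__ == "__main__":
    observed = [
        Flow("hmi-01", "plc-01", "modbus", 3),    # permitted read
        Flow("hmi-01", "plc-01", "modbus", 16),   # HMI attempting a write
        Flow("gateway-02", "plc-01", "modbus", 3) # unlisted source
    ]
    for flow in violations(observed):
        print("blocked:", flow)
```

The default-deny design is what matters here. An autonomous agent on a compromised HMI can still read what the HMI was always allowed to read, but a write it was never permitted to make shows up as a violation regardless of what the payload looks like.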

The Bigger Picture

Google’s report documents a clear progression: from AI-assisted research (2024), to AI-augmented development (2025), to AI-generated exploitation and autonomous operations (2026). Each step has been faster than analysts predicted.

The cybersecurity industry has long warned that AI would eventually favor attackers. That future isn’t approaching — it’s here. For OT/ICS environments, where safety systems protect human lives and critical infrastructure serves millions, the stakes of this shift cannot be overstated.

The question is no longer whether AI will be used to attack industrial systems. It’s when — and whether defenders will be ready.

Sources: Google Threat Intelligence Group, “GTIG AI Threat Tracker: Adversaries Leverage AI for Vulnerability Exploitation, Augmented Operations, and Initial Access” (May 12, 2026); SecurityWeek, “Google Detects First AI-Generated Zero-Day Exploit” (May 11, 2026)